Connection to Databricks

To connect a Databricks lakehouse to the DQC Platform, you’ll need to collect a few key pieces of configuration. This guide walks you through all required values, token creation, and permission setup.

Required connection details

| Field | Example | Description |
|---|---|---|
| Name | | Any internal name for this connection |
| Host | | Log into Databricks and copy the host from the URL |
| Token (dev) | | Developer token; see the steps below for generating access |
| Service Principal (prod) | Client ID: | In Azure Databricks: User icon > Settings > Identity and Access > Service Principals |
| Cluster ID | | In Databricks, go to Clusters, open one, and extract the ID from the URL |
| Catalog | | Catalog that contains your target schema and tables |
| Schema | | Schema to which you want to connect |

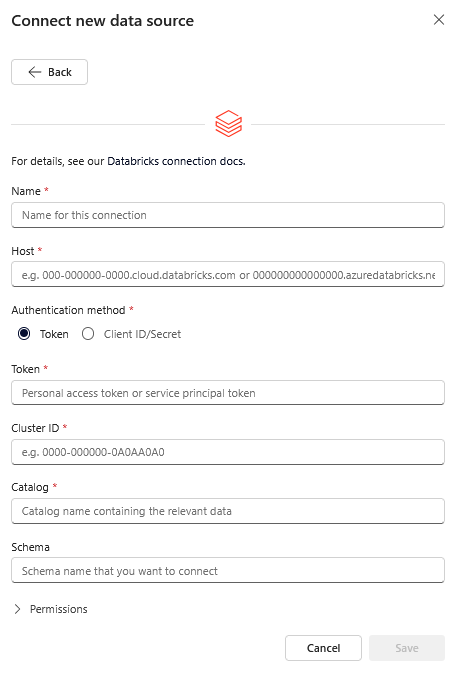

Enter the connection values in the integration form shown above
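The Host and Cluster ID fields are both read from browser URLs, so a small helper can reduce copy-and-paste mistakes. The following is a minimal sketch using only the Python standard library. The function names and the example URL are hypothetical, and the sketch assumes that the cluster ID is the URL segment directly after `clusters/`; check this against the URL shown in your own workspace.

```python
import re
from urllib.parse import urlparse

# Assumption: the cluster ID is the segment right after "clusters/",
# whether that segment sits in the path or in the fragment (after '#').
CLUSTER_SEGMENT = re.compile(r"clusters/([^/?#]+)")


def extract_host(url: str) -> str:
    """Return the host of a workspace URL, without scheme, path, or query."""
    parsed = urlparse(url if "://" in url else f"https://{url}")
    if not parsed.hostname:
        raise ValueError(f"no host found in {url!r}")
    return parsed.hostname


def extract_cluster_id(url: str) -> str:
    """Return the cluster ID from the URL of an opened cluster page."""
    match = CLUSTER_SEGMENT.search(url)
    if match is None:
        raise ValueError(f"no cluster ID found in {url!r}")
    return match.group(1)


# Hypothetical URL for illustration; paste the one from your browser instead.
example_url = (
    "https://adb-1234567890123456.7.azuredatabricks.net/"
    "?o=1234567890123456#setting/clusters/0123-456789-abcde123/configuration"
)
print("Host:      ", extract_host(example_url))
print("Cluster ID:", extract_cluster_id(example_url))
```

Both helpers raise a `ValueError` instead of returning an empty string, so a malformed URL is caught before it reaches the integration form.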


Create a Databricks access token

The DQC Platform requires an access token to connect. We recommend using a service principal.

Option 1: Service principal (recommended)

1. Create a new service principal for the DQC Platform. Use the Databricks API to create a principal and note the Application ID. Instructions (for Azure Databricks, see here).
2. Grant token usage to the service principal. In your Databricks workspace, allow the principal to use tokens. Instructions
3. Generate an access token. Create a token and set `"lifetime_seconds": null` for uninterrupted access. Store the token securely. Instructions
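To illustrate the token step, the sketch below builds (but does not send) an HTTP request that asks Databricks for a token on behalf of a service principal, with `"lifetime_seconds": null` in the body. It assumes the Token Management API endpoint `/api/2.0/token-management/on-behalf-of/tokens`; confirm the endpoint and payload against the linked instructions for your cloud. The host, admin token, and application ID are placeholders, and `build_token_request` is a hypothetical helper name.

```python
import json
import urllib.request


def build_token_request(
    host: str, admin_token: str, application_id: str
) -> urllib.request.Request:
    """Build a request that creates a token for a service principal."""
    payload = {
        "application_id": application_id,
        "comment": "DQC Platform connection",
        # None is serialized as JSON null, i.e. no expiry is requested.
        "lifetime_seconds": None,
    }
    return urllib.request.Request(
        # Assumed endpoint; verify it in the Databricks API reference.
        url=f"https://{host}/api/2.0/token-management/on-behalf-of/tokens",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {admin_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
request = build_token_request(
    host="example.cloud.databricks.com",
    admin_token="<admin-token>",
    application_id="<application-id>",
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Sending the request with `urllib.request.urlopen(request)` would return the new token in the response body. Your workspace may enforce a maximum token lifetime, in which case a `null` lifetime may be rejected or capped.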

Option 2: User-based token (for development)

1. Navigate to User Settings > Developer Tools in Databricks.
2. Generate a personal access token.
3. Store it securely for use in the DQC Platform.
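One common way to keep a development token out of scripts and version control is to read it from an environment variable. The sketch below assumes a variable named `DATABRICKS_TOKEN`; the helper names are hypothetical, and a dedicated secret manager is usually the better choice outside of local development.

```python
import os


def load_token(env_var: str = "DATABRICKS_TOKEN") -> str:
    """Read the access token from the environment instead of hard-coding it."""
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(f"environment variable {env_var} is not set")
    return token


def mask(token: str) -> str:
    """Return a log-safe form that shows only the last four characters."""
    return "*" * max(len(token) - 4, 0) + token[-4:]


# Hypothetical token value, used here only to demonstrate masking.
print(mask("dapi-example-1234"))
```

With the variable exported in your shell, `load_token()` returns the token, and `mask(load_token())` gives a value that is safe to write to logs.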

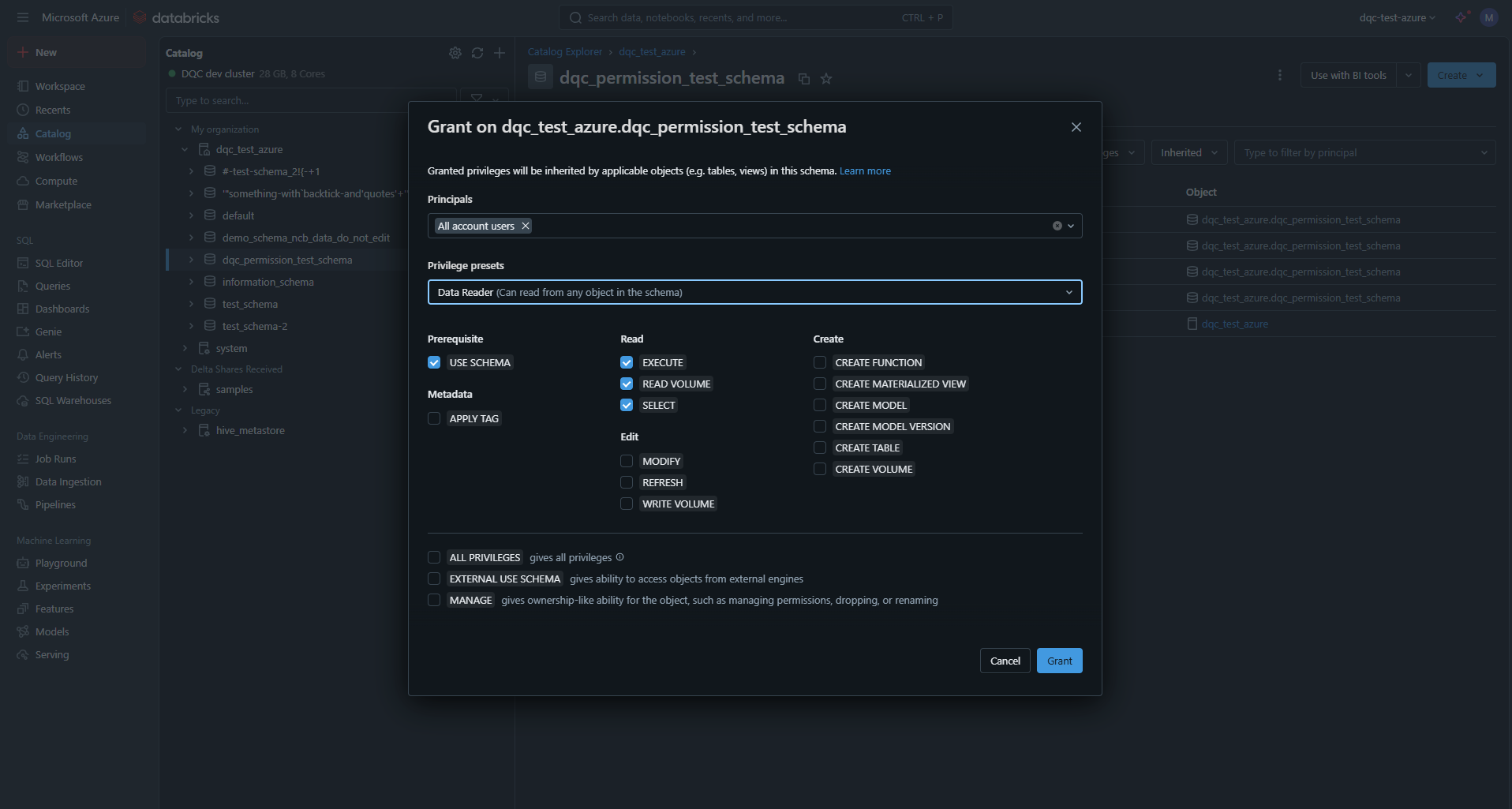

Grant access to the target schema

To allow the DQC Platform to read data, grant the Data Reader role to the relevant user or service principal on the desired schema.

In addition, Databricks SQL Warehouse connections need the CREATE VOLUME privilege so that temporary in-memory tables can be staged. Grant it on the schema:

`GRANT CREATE VOLUME ON SCHEMA <schema> TO <service_principal>;`

or on the catalog:

`GRANT CREATE VOLUME ON CATALOG <catalog> TO <service_principal>;`

Set schema-level read permissions in Unity Catalog
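If you manage several schemas, the CREATE VOLUME grants above can be generated rather than typed by hand. This is a minimal sketch with hypothetical helper names. It assumes that identifiers are quoted with backticks and that a service principal is referenced by its application ID; review the generated statements before running them in a SQL editor.

```python
from typing import Optional


def quote_identifier(name: str) -> str:
    """Wrap an identifier in backticks, doubling any embedded backtick."""
    return "`" + name.replace("`", "``") + "`"


def create_volume_grant(
    principal: str, catalog: str, schema: Optional[str] = None
) -> str:
    """Build a GRANT CREATE VOLUME statement for a schema or a whole catalog."""
    if schema is None:
        target = f"CATALOG {quote_identifier(catalog)}"
    else:
        target = f"SCHEMA {quote_identifier(catalog)}.{quote_identifier(schema)}"
    return f"GRANT CREATE VOLUME ON {target} TO {quote_identifier(principal)};"


# Placeholder names for illustration only.
print(create_volume_grant("<application-id>", "main", "sales"))
print(create_volume_grant("<application-id>", "main"))
```

Granting on the schema keeps the privilege as narrow as possible; the catalog-level form is only needed when the connection should cover every schema in that catalog.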

Databricks Unity Catalog Permission Requirements

Why Does DQC.ai Need CREATE VOLUME Permission?

When using DQC.ai with Databricks Unity Catalog, your technical service user requires the CREATE VOLUME permission.

This is purely a technical requirement for query execution and does NOT pose any security risk to your data.

What This Permission Is Used For

Temporary Query Staging Only

The CREATE VOLUME permission is used exclusively for creating temporary staging areas during query execution. Here's what happens:

During Query Execution: When DQC.ai processes your data, the connector needs to temporarily stage intermediate results and in-memory data structures

Temporary Volumes Are Created: These volumes act as temporary scratch space for query execution - similar to temp tables, but for file-based operations

Automatic Cleanup: These temporary volumes are automatically cleaned up after query execution completes

What This Permission Does NOT Do

Does NOT grant access to read your existing data

Does NOT allow permanent modifications to your data

Does NOT grant access to other catalogs, schemas, or tables

Does NOT bypass your existing data access controls

Technical Background

Why Not Just Use Read Permissions?

You might wonder: "If DQC.ai only reads data, why does it need CREATE VOLUME?"

The Databricks SQL connector (unlike PySpark) cannot directly execute queries on pure in-memory data structures. When the DQC connector needs to:

Process DataFrames or tables

Create temporary lookup tables for joins

Stage intermediate results during complex transformations

...it must materialize this data in a location that Databricks compute can access. Unity Catalog Volumes are the governed mechanism for this file-based staging.

Allow static IP access

The DQC Platform connects using static IP addresses. Ensure that these are whitelisted in your environment:

3.123.94.228
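Before testing the connection, you can check whether the address above is already covered by your network allowlist. The sketch below uses Python's standard `ipaddress` module; the function name and the example CIDR ranges are hypothetical, and the allowlist itself has to be exported from your own firewall or workspace IP access list.

```python
import ipaddress
from typing import Iterable, List

# Static IP address listed in this guide.
DQC_STATIC_IPS = ["3.123.94.228"]


def missing_from_allowlist(
    allowlist_cidrs: Iterable[str],
    required_ips: Iterable[str] = DQC_STATIC_IPS,
) -> List[str]:
    """Return the required IPs that no allowlist entry covers."""
    networks = [ipaddress.ip_network(cidr, strict=False) for cidr in allowlist_cidrs]
    return [
        ip
        for ip in required_ips
        if not any(ipaddress.ip_address(ip) in network for network in networks)
    ]


# Hypothetical allowlist entries for illustration.
print(missing_from_allowlist(["10.0.0.0/8", "3.123.94.228/32"]))
print(missing_from_allowlist(["10.0.0.0/8"]))
```

An empty result means every required address is covered; any address that is returned still needs to be added to the allowlist.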

Notes

For most production environments, we strongly recommend using a service principal

The connection is read-only and encrypted

Learn more: Supported data sources, Azure SQL connection, Connection to Snowflake