DQC Whitepaper

AI agents for data quality and improvement

For decades, enterprises have known their data was flawed, yet the tools to fix it have always fallen short. Missing fields, duplicates, invalid addresses, and inconsistent formats continue to undermine analytics and operations. Rule-based systems are brittle, manual cleansing is costly, and traditional machine learning can detect issues but not resolve them.

Our new whitepaper “Why Agentic Workflows Are the Breakthrough Enterprise Data Quality Has Been Waiting For” explains how this is finally changing.

The Fundamental Problem: Why Data Quality Has Resisted Automation

Data quality sits at the intersection of three challenges: technical complexity, semantic ambiguity, and scale. Data lives across heterogeneous systems such as ERP platforms, data warehouses, APIs, and data lakes. Determining whether data is “correct” often requires human domain knowledge. And the sheer variety of possible issues makes writing rules by hand for every case impractical.

The Agentic Breakthrough: Reasoning at Scale

Agentic workflows combine large language models with tool access, multi-step reasoning, and autonomous decision-making. They pursue goals (“enrich missing customer data”), break them down into subtasks, execute plans, validate outcomes, and learn from feedback. This introduces human-like reasoning into scalable automation—transforming how data quality can be managed.

From Finding to Improving – The Two-Stage Workflow

Stage one identifies issues through statistical profiling, machine learning, and LLM-powered contextualization. Stage two is where the system acts: agents select appropriate remediation strategies, use internal or external tools, validate results, and refine them iteratively. The result is a continuous, intelligent loop—from detection to improvement.
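To make the two stages concrete, here is a minimal Python sketch of a find-then-improve loop. It is an illustration under simplifying assumptions, not the DQC implementation: stage one is reduced to a completeness check instead of statistical profiling, ML, and LLM contextualization, and stage two uses a single hypothetical strategy (`infer_country_from_phone`) with a hypothetical validator. All names, prefixes, and sample records are invented for the example.

```python
# Hypothetical reference data for the sketch (not from the whitepaper).
PHONE_PREFIX_TO_COUNTRY = {"+49": "DE", "+33": "FR"}


def find_issues(records, required_fields):
    """Stage one (simplified): flag required fields that are empty or absent."""
    return [
        (index, field)
        for index, record in enumerate(records)
        for field in required_fields
        if not record.get(field)
    ]


def infer_country_from_phone(record):
    """Hypothetical remediation strategy: derive the country from the dial prefix."""
    phone = record.get("phone") or ""
    for prefix, country in PHONE_PREFIX_TO_COUNTRY.items():
        if phone.startswith(prefix):
            return country
    return None


# One strategy and one validator per field; both tables are assumptions.
STRATEGIES = {"country": infer_country_from_phone}
VALIDATORS = {"country": lambda value: value in {"DE", "FR"}}


def improve(records, issues):
    """Stage two (simplified): propose a fix, validate it, write back only on success."""
    audit = []
    for index, field in issues:
        strategy = STRATEGIES.get(field)
        validator = VALIDATORS.get(field)
        proposal = strategy(records[index]) if strategy else None
        if proposal is not None and validator is not None and validator(proposal):
            records[index][field] = proposal
            audit.append((index, field, "fixed"))
        else:
            audit.append((index, field, "unresolved"))
    return audit


records = [
    {"name": "A", "phone": "+49 30 1234", "country": ""},
    {"name": "B", "phone": "", "country": ""},
    {"name": "C", "phone": "+33 1 2345", "country": "FR"},
]
issues = find_issues(records, ["country"])
audit = improve(records, issues)
remaining = find_issues(records, ["country"])  # re-run detection to close the loop
```

Re-running `find_issues` after `improve` is what turns the two stages into a loop: anything still flagged can be handed to another strategy or to a human reviewer.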

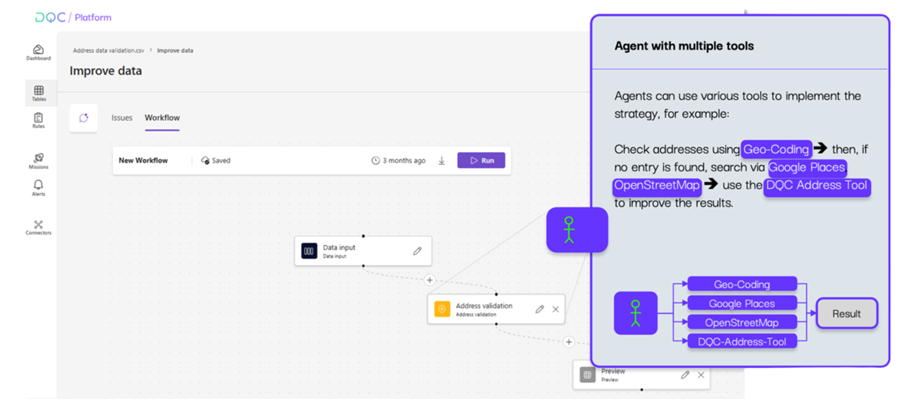

Concrete example from the DQC Platform (see graphic):

An agent cleans address records with a multi-step tool plan. It first checks entries via Geo-Coding. If no result is found—or confidence is too low—it automatically escalates: Google Places → OpenStreetMap → DQC Address Tool. After each step, the agent validates country-specific formats and coordinates, applies confidence thresholds, logs decisions, and only writes back when all validations pass. The result is a robust, reusable “Find → Improve” loop that handles address edge cases at scale with full auditability.
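The escalation logic described above can be sketched roughly as follows. This is a hedged illustration, not the platform's code: the provider functions are stubs standing in for the geocoding, Google Places, and OpenStreetMap lookups (no real API is called), and the `Candidate` type, the postal code patterns, and the 0.8 threshold are assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Candidate:
    address: str
    country: str
    lat: float
    lon: float
    confidence: float


# Assumed, deliberately simple country-specific format checks.
POSTCODE_PATTERNS = {
    "DE": re.compile(r"\b\d{5}\b"),
    "US": re.compile(r"\b\d{5}(-\d{4})?\b"),
}


def coordinates_valid(c: Candidate) -> bool:
    return -90.0 <= c.lat <= 90.0 and -180.0 <= c.lon <= 180.0


def format_valid(c: Candidate) -> bool:
    pattern = POSTCODE_PATTERNS.get(c.country)
    # Countries without a known pattern are not rejected in this sketch.
    return pattern is None or pattern.search(c.address) is not None


Provider = Tuple[str, Callable[[str], Optional[Candidate]]]


def resolve_address(raw: str, providers: List[Provider], threshold: float = 0.8):
    """Try providers in order; accept the first result that passes every check."""
    log = []
    for name, lookup in providers:
        candidate = lookup(raw)
        if candidate is None:
            log.append((name, "no result"))
        elif candidate.confidence < threshold:
            log.append((name, "low confidence"))
        elif not (coordinates_valid(candidate) and format_valid(candidate)):
            log.append((name, "validation failed"))
        else:
            log.append((name, "accepted"))
            return candidate, log  # only now would the record be written back
    return None, log  # nothing passed: leave the record untouched


# Stub providers with canned answers (hypothetical, for illustration only).
def geocoding_stub(raw):
    return None


def places_stub(raw):
    return Candidate("Hauptstr. 1, 10115 Berlin", "DE", 52.53, 13.38, 0.55)


def osm_stub(raw):
    return Candidate("Hauptstraße 1, 10115 Berlin", "DE", 52.53, 13.38, 0.93)


providers = [
    ("geocoding", geocoding_stub),
    ("google_places", places_stub),
    ("openstreetmap", osm_stub),
]
result, decision_log = resolve_address("Hauptstr 1 Berlin", providers)
```

Keeping the decision log alongside the result is what provides the audit trail: each skipped provider is recorded with the reason it was rejected.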

Architecture for Production – Beyond the Demo

Turning this vision into a production system requires engineering rigor. DQC.ai’s architecture unifies access across diverse data sources, manages large-scale context efficiently, scales economically, and ensures reliability through validation frameworks, confidence scoring, and audit trails.

Why Now – The Convergence

Agentic data quality is only possible today because of the convergence of key technologies: advanced LLMs, robust agent frameworks, high-performance vector databases, and modern enterprise data platforms. Together, they create capabilities that didn’t exist just a few years ago.

Why We’re Building in the Data Quality Market

The shift from detection to remediation expands the market dramatically. Agentic systems reduce implementation barriers, interpret data quality requirements in natural language, and enable complex, previously “unautomatable” use cases like semantic enrichment and multi-source reconciliation.

Real-World Impact

The whitepaper demonstrates what this looks like in practice with concrete projects: standardizing millions of addresses, intelligently deduplicating records, and enriching product data from unstructured sources. The outcomes are striking and make the potential of agentic workflows real. See the case studies at the end for more.